For this example the code is placed in one scriptable task. For production use it is more sensible to split it into several actions and workflows.

- Input Parameter: VM [VcVirtualMachine]

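Set the interval:

```javascript
var end = new Date(); // now
var start = new Date();
start.setTime(end.getTime() - 3600000); // 1 h (3,600,000 ms) before end
System.log(end.toUTCString());
System.log(start.toUTCString());
```

Look at the logged time stamps: they are in UTC, and so are the time stamps that later end up in the graph. If you want to show local time instead, you have to recalculate the time stamps written to the CSV file using the `getTimezoneOffset()` method of a `Date` object. Note that it returns the offset of the machine the script runs on, i.e. the vCO server.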
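A minimal sketch of the UTC-to-local recalculation with `getTimezoneOffset()`, in plain ES5-style JavaScript without any vCO objects. `toLocalStamp` and `pad2` are hypothetical helper names. It assumes the script engine parses ISO 8601 strings such as `2013-05-14T10:20:00Z` with `new Date(...)` (standard behaviour since ES5). The trailing `Z` is kept on purpose so that the gnuplot `timefmt` of Part III still matches, even though the time is no longer UTC.

```javascript
// Hypothetical helper: left-pad a number to two digits
function pad2(n) {
    return (n < 10 ? "0" : "") + n;
}

// Hypothetical helper: shift a UTC sample time stamp ("2013-05-14T10:20:00Z")
// to local wall-clock time. offsetMinutes follows Date.prototype.getTimezoneOffset():
// UTC minus local time in minutes (e.g. -120 for UTC+2). If omitted, the offset of
// the machine running the script is used, evaluated at the sample's own date.
function toLocalStamp(utcStamp, offsetMinutes) {
    var d = new Date(utcStamp); // ISO string ending in Z is parsed as UTC
    if (offsetMinutes === undefined) {
        offsetMinutes = d.getTimezoneOffset();
    }
    var shifted = new Date(d.getTime() - offsetMinutes * 60000);
    // Format with the UTC getters so the result does not depend on the host zone a second time
    return shifted.getUTCFullYear() + "-" + pad2(shifted.getUTCMonth() + 1) + "-" +
        pad2(shifted.getUTCDate()) + "T" + pad2(shifted.getUTCHours()) + ":" +
        pad2(shifted.getUTCMinutes()) + ":" + pad2(shifted.getUTCSeconds()) + "Z";
}
```

In Part II you would push `toLocalStamp(Temp[j])` instead of `Temp[j]`. Because `getTimezoneOffset()` is called on each sample's own `Date`, a daylight-saving change inside the queried window is picked up as well.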

create querySpec (here for only one VM)

var querySpec = new Array();create perfMetricId for one metric (CPU average in MHz) and call perfManager

querySpec.push(new VcPerfQuerySpec());

querySpec[0].entity = VM.reference;

querySpec[0].startTime = start;

querySpec[0].endTime = end;

querySpec[0].intervalId = 20; //or use 300 for 5 minute stepping

var PM = new VcPerfMetricId();Now the array CSVs contains one VcPerfEntityMetricCSV object - we only called one - nevertheless i will iterate over CSVs so you can reuse the code

PM.counterId = 6; //6 = cpu.usagemhz.average

PM.instance = ""; // no instances

var arrPM = new Array();

arrPM.push(PM);

querySpec[0].metricId = arrPM; //assign PerfMetric to querySpec

querySpec[0].format = "csv";

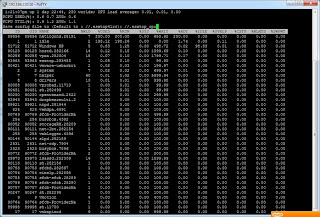

var CSVs = VM.sdkConnection.perfManager.queryPerf(querySpec);
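The query hard-codes counterId 6 for cpu.usagemhz.average. Counter IDs are generated by the server and may differ between vCenter installations, so looking the ID up by its dotted name is more robust. The sketch below is plain JavaScript; `findCounterId` is a hypothetical helper, and it assumes each counter object has the shape of a vSphere PerfCounterInfo reduced to `key`, `groupInfo.key`, `nameInfo.key` and `rollupType` as a string. Feeding it the counter list of the performance manager (the `perfCounter` property in the vSphere API) and converting the `rollupType` enum to its string value in vCO are assumptions to verify in the API Explorer.

```javascript
// Hypothetical helper: return the counter ID for a dotted name such as
// "cpu.usagemhz.average" (group.name.rollup), or -1 if no counter matches.
function findCounterId(counters, dottedName) {
    for (var i = 0; i < counters.length; i++) {
        var c = counters[i];
        var name = c.groupInfo.key + "." + c.nameInfo.key + "." + c.rollupType;
        if (name === dottedName) {
            return c.key;
        }
    }
    return -1; // not found
}
```

With such a lookup, `PM.counterId = findCounterId(counters, "cpu.usagemhz.average")` would replace the literal 6, and a result of -1 tells you the counter is not available.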

Part II - join data and time stamps in a CSV file

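```javascript
// Loop over all results; the loop is closed at the end of Part III
for (var i = 0; i < CSVs.length; i++)
{
    var CSV = CSVs[i];
    // sampleInfoCSV alternates interval and time stamp: "20,<time>,20,<time>,..."
    var Temp = CSV.sampleInfoCSV.split(",");
    var Sample = [];
    for (var j = 0; j < Temp.length; j++)
    {
        // only use odd entries: they contain the sample time, the even ones contain the interval
        if (j % 2 != 0)
        {
            Sample.push(Temp[j]);
        }
    }
    var Values = CSV.value[0].value.split(","); // the MHz values
```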

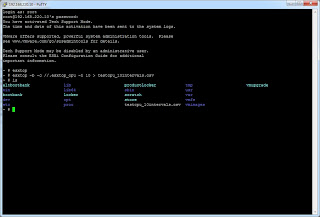

```javascript
    var BaseName = "C:/Test/" + workflow.id;
    var CSVname = BaseName + ".csv";
    var ControlName = BaseName + ".control";
    var PNGname = BaseName + ".png";
    var FW = new FileWriter(CSVname);
    FW.open();
    FW.lineEndType = 1;
    for (var j = 0; j < Sample.length; j++)
    {
        FW.writeLine(Sample[j] + "," + Values[j]);
    }
    FW.close(); // without the parentheses the method is only referenced, not called
```

Using workflow.id to build the file names makes them unique for each workflow run, so you can run the workflow in parallel without getting duplicate file names.

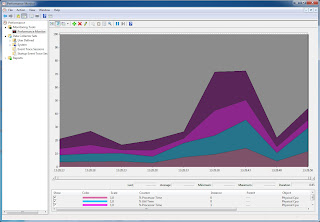
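The joining logic of Part II can also be kept in a plain function that needs no vCO objects, which makes it easy to test. `joinSamples` is a hypothetical name; it is a sketch assuming the sampleInfoCSV layout described above (interval and time stamp alternating) and one value per sample in the value CSV. It walks both lists in step with index loops, so a length mismatch does not produce `undefined` entries in the CSV file.

```javascript
// Hypothetical helper: join vSphere CSV performance output into "timestamp,value" lines.
// sampleInfoCSV: "20,t1,20,t2,..." (interval, time stamp, interval, time stamp, ...)
// valueCSV:      "v1,v2,..."       (one value per sample)
function joinSamples(sampleInfoCSV, valueCSV) {
    var info = sampleInfoCSV.split(",");
    var values = valueCSV.split(",");
    var lines = [];
    // sample k has its time stamp at odd index 2k+1
    for (var k = 0; 2 * k + 1 < info.length && k < values.length; k++) {
        lines.push(info[2 * k + 1] + "," + values[k]);
    }
    return lines;
}
```

Inside the loop, `joinSamples(CSV.sampleInfoCSV, CSV.value[0].value)` returns exactly the lines to pass to `FW.writeLine()`.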

Part III - generate graph

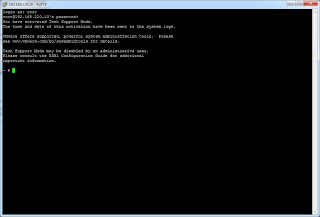

To do this, there are some requirements:

- enable local execution for vCO
- download gnuplot and unzip it; in this example it is unzipped to C:\Test\gnuplot

Pay attention to the quotation marks: the JavaScript strings below are delimited with single quotes so that they can contain the double quotes gnuplot expects in the control file.

```javascript
    var FW = new FileWriter(ControlName);
    FW.open();
    FW.lineEndType = 1;
    FW.writeLine('set datafile separator ","');
    FW.writeLine('unset key');
    FW.writeLine('set title "performance data ' + VM.id + ' [' + VM.name + ']"');
    FW.writeLine('set terminal png');
    FW.writeLine('set output "' + PNGname + '"');
    FW.writeLine('set xdata time');
    FW.writeLine('set timefmt "%Y-%m-%dT%H:%M:%SZ"');
    FW.writeLine('set format x "%H:%M:%S"');
    FW.writeLine('set xtics rotate');
    FW.writeLine('set ylabel "MHz"');
    FW.writeLine('plot "' + CSVname + '" using 1:2 with lines');
    FW.writeLine('quit');
    FW.close(); // call close() so the control file is complete before gnuplot reads it

    var cmd = "cmd.exe /c C:\\Test\\gnuplot\\binary\\gnuplot.exe " + ControlName;
    System.log(cmd);
    var CMD = new Command(cmd);
    var Result = CMD.execute(true); // true: wait for gnuplot to finish
    System.log("GNUplot: " + Result);
} // end of loop over CSVs
```
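To check the quoting without running the workflow, the control file lines can be produced by a plain function and inspected. `buildGnuplotScript` is a hypothetical name, and the sketch mirrors the control file above (spelling out `with lines`). As in the original, a VM name containing a double quote would still end the gnuplot title string early.

```javascript
// Hypothetical helper: return the gnuplot control file as an array of lines.
// Single-quoted JavaScript literals let the double quotes gnuplot needs pass through unchanged.
function buildGnuplotScript(csvName, pngName, title) {
    return [
        'set datafile separator ","',
        'unset key',
        'set title "' + title + '"',
        'set terminal png',
        'set output "' + pngName + '"',
        'set xdata time',
        'set timefmt "%Y-%m-%dT%H:%M:%SZ"',
        'set format x "%H:%M:%S"',
        'set xtics rotate',
        'set ylabel "MHz"',
        'plot "' + csvName + '" using 1:2 with lines',
        'quit'
    ];
}
```

In the workflow, each element of the returned array would be passed to `FW.writeLine()`; outside vCO you can simply log the lines and compare them with what gnuplot expects.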

That's all - feel free to leave a comment - regards, Andreas